Should Meta change its policy on deepfakes?

It looks like the Oversight Board thinks so. Will its eventual decision matter?

Today let’s talk about two new cases involving manipulated media and platform policy. Both have to do with social media posts that seem to violate the spirit of rules against using digital media to mislead the electorate. But given their limited impact, it’s worth asking what protections against deepfakes platforms are in a good position to provide.

The first and more prominent case comes from Meta. On Monday, its Oversight Board announced it would hear a case involving a video of President Biden. The board described the post today on its blog:

On October 29, 2022, President Biden went to vote early in-person during the 2022 midterm elections in the United States, accompanied by his adult granddaughter, a first-time voter. After they voted, they exchanged “I Voted” stickers. President Biden placed a sticker on his granddaughter, above her chest, according to her instruction, and kissed her on the cheek. This moment was captured on video.

In May 2023, a Facebook user posted a seven-second altered version of that clip. The footage has been altered so that it loops, repeating the moment when President Biden’s hand makes contact with his granddaughter’s chest. The altered video plays to a short excerpt of the song “Simon Says” by Pharoahe Monch, which has the lyric “Girls rub on your titties.” The caption that accompanies the video states that President Biden is “a sick pedophile” for touching his granddaughter’s breast in the way he does. It also questions the people who voted for him, saying they are “mentally unwell.”

The first thing to note is that this is not, strictly speaking, a “deepfake.” The video was manipulated, but rather than add events that never took place, it simply takes one event out of context and casts it in a false and sinister light.

The second thing to note is that almost no one saw this video, at least on Facebook. The post that the board agreed to reconsider had fewer than 30 views, the board said, and not one person had re-shared it. (I’m told it was posted by someone with a private account.)

One person who saw the video reported it to Facebook. Two layers of moderators declined to remove it. A user appealed that decision to the Oversight Board, and now the board will consider it.

Meta has an existing policy on manipulated media, and it’s written rather narrowly. The company removes a post only if two conditions are met: the post is “the product of artificial intelligence or machine learning, including deep learning techniques (e.g., a technical deepfake),” and it would mislead an average person to believe that “a subject of the video said words that they did not say.”

Neither condition applies here. The video appears to have been edited without the benefit of generative AI, and it doesn’t put any words in Biden’s mouth.

At the same time, Meta’s manipulated media policy does express concern about misleading videos in general. The policy states:

Media, including image, audio or video, can be edited in a variety of ways. In many cases, these changes are benign, such as a filter effect on a photo. In other cases, the manipulation is not apparent and could mislead, particularly in the case of video content. We aim to remove this category of manipulated media when the criteria laid out below have been met.

My guess is that the board read this and thought that perhaps the criteria Meta uses to select manipulated media for removal ought to be expanded.

If you believe the Biden video should be removed from Facebook, though, how do you write the policy? It’s tempting to say that Meta should ban all “deceptively edited” videos. But how do you account for satire or parody? How do you decide what counts as “deceptive” in a way that you can scale to a global audience?

The current policy bans putting words in people’s mouths that they didn’t say, a narrow but binary distinction. The board looks to be considering a policy that would ban showing things that did happen while imputing a false meaning to them. That’s a much more difficult policy to write and to enforce.

If Meta doesn’t change the policy, though, it has effectively created a loophole for bad actors to exploit. You might not be able to post your deepfake of Joe Biden on Facebook, but you can edit a real video of him to suggest that he’s doing all manner of things that he isn’t.

It’s difficult for me to imagine serious harm coming from this, for reasons I’ll get into later. And in the United States, we give people wide latitude to say insane things about the president. But if you’re a year out from a high-stakes election and you’re bracing for a flood of deceptive videos, it’s probably worth at least airing out some of these trade-offs.

It will be a long while before anything comes from this. It took the board five months to pick this case, and in keeping with the geologic time scale at which it operates, it will likely be next year before it makes its decision. After that, Meta will get an additional two months to decide what, if anything, to do about the board’s opinion. (While it would be required to remove the post if the board ordered it, policy opinions are only advisory and Meta is free to ignore them. It often does!)

On the whole, I’m glad the board is paying attention to manipulated media. But whatever happens here, I wonder if Joe Biden was the right test case for rethinking Meta’s policies. Biden benefits from the fact that he gets daily coverage from an international press corps that will quickly debunk any deceptive videos that are going viral.

In the meantime, there are plenty of state and local officials — not to mention average people — who may suffer much greater harm from manipulated videos that spread on Facebook or Instagram without any pushback from the platform or the press. When the board pressure-tests Meta’s manipulated media policy, I hope it keeps those people in mind.

II.

Earlier this month I wrote here about how synthesized audio clips posted to social networks had roiled the election in Slovakia. This week, another mysterious audio clip hit the internet, this time targeting the United Kingdom’s Labour Party.

Morgan Meaker wrote about it at Wired:

As members of the UK’s largest opposition party gathered in Liverpool for their party conference — probably their last before the UK holds a general election — a potentially explosive audio file started circulating on X, formerly known as Twitter.

The 25-second recording was posted by an X account with the handle “@Leo_Hutz” that was set up in January 2023. In the clip, Sir Keir Starmer, the Labour Party leader, is apparently heard swearing repeatedly at a staffer. “I have obtained audio of Keir Starmer verbally abusing his staffers at [the Labour Party] conference,” the X account posted. “This disgusting bully is about to become our next PM.”

As of Tuesday, there was still some debate about whether the clip might be genuine. A fact checker interviewed by Wired said “there are characteristics of it that point to it being a fake,” though at the moment the tools we have for assessing the authenticity of digital media are limited and often inaccurate.

That said, I’d be suspicious of any potential bombshell that landed first on the page of a pseudonymous X account. (One who, incidentally, seems to relish the debate they have inspired over whether the clip is real or fake, and whose timeline bears all the hallmarks of a shitposter.)

Should this clip be identified as a fake, it’s the sort of thing that would be removed from Facebook, under Meta’s policies. But this is X, and while that company updated its own synthetic media policy in April, it’s no longer really clear day to day which of its rules are in effect or not. In any case, the post has not been removed.

It’s possible that recent high-profile cases of deceptive media are a fluke. There’s always going to be weird stuff floating around on the internet, and the impact from the most recent cases seems to be fairly limited.

My fear, though, is that it’s a sign of things to come — that as more people gain access to generative AI tools, and those tools continue to rapidly improve, platforms may soon be faced with more deepfakes than they know what to do with. And some of them may go viral before anyone realizes what is happening.

So far, the worst fears about deepfakes interfering with US politics haven’t come to pass. But around the world, incidents like the Starmer case continue to diminish trust in the information ecosystem overall. The lights are beginning to blink red. And fixing the problem will require a lot more than an Oversight Board.

My chat with Pinterest CEO Bill Ready

Recently Fast Company offered me the chance to sit down with someone who I wish I had met much earlier. Bill Ready, who became CEO of Pinterest last year, is a relative newcomer to social apps. Before joining Pinterest he worked at Google, where he was the company’s president of commerce. But he also oversaw one of the craziest social products ever developed — the Venmo friend feed — when he was that company’s CEO. And he has become a vocal critic of platforms that seek cheap engagement at the expense of social cohesion, while implementing various reforms that he hopes will make Pinterest a calmer and more inspiring place to spend time.

Pinterest has had its share of turmoil, including some ugly cases of discrimination and harassment that predated Ready’s arrival. But given the shakeups in the social landscape lately, I was excited to talk to the CEO about how he plans to seize the moment.

This was my favorite part of the conversation.

Bill Ready: I remember an early meeting at a company that had acquired one of my startups — I won’t name the company — and we’re talking about what we’re going to do, and they talked about “harvesting value from users.” I was appalled. Like, what? These are human beings. These are people.

I’ve got nearly half a billion users now. Part of my standard of how I grade myself is I imagine every single one of them looking back at me. If I sat down with them face-to-face, could I—with a straight face, with a clear conscience—explain my actions and say why I truly believe what I did was the right thing for them?

Casey Newton: Well, that’s a great story about your time at PayPal.

Ready: [Laughs]

You can read our conversation here.


Governing

In another attempt at regulating TikTok, some lawmakers have indicated support for the Guard Act, which could give the Department of Commerce more authority to ban the app. (Gavin Bade / Politico)

The largest distributed denial-of-service attack on record, an HTTP/2 Rapid Reset Attack, was detected by Amazon, Google, and Cloudflare in August. It was eight times the size of the previous record. (Jonathan Greig / The Record)

The European Commission is asking users of Bing, Edge, Microsoft Advertising and iMessage whether those Microsoft and Apple services should be required to comply with the Digital Markets Act. (Foo Yun Chee / Reuters)

European commissioner Thierry Breton threatened X with penalties should it be found to be in violation of the Digital Services Act, after reports of misinformation about Hamas’ attack on Israel were found on the platform. (Brian Fung and Donie O'Sullivan / CNN)

“Hacktivists” are increasingly mounting attacks on both sides of the Israel-Hamas conflict, targeting government websites and media outlets. (Lily Hay Newman and Matt Burgess / WIRED)

Industry

Google is now asking users to create passkeys for their accounts by default, making the transition from traditional passwords to passwordless logins that use on-device authentication methods. (Jess Weatherbed / The Verge)

X reportedly shut down a media-matching tool within Smyte, an internal tool used to combat misinformation, ahead of the Israel-Hamas conflict breaking out. (Erin Woo / The Information)

Users on X can now block replies from non-paying users, which could make it easier for misinformation to spread. (Richard Lawler / The Verge)

Bluesky’s latest update includes email verification and a system that will flag misleading links, part of its ongoing efforts to amp up security and authentication. (Sarah Perez / TechCrunch)

TikTok rolled out a feature letting users post to the app directly from third-party editing tools, including Adobe’s creative suite, Twitch, and ByteDance’s own CapCut. (Sarah Perez / TechCrunch)

Adobe announced at its MAX conference that its generative AI image service Firefly has updated models which will improve human rendering and create more realistic images. (Frederic Lardinois / TechCrunch)

An analysis of 3,000 AI images generated by Midjourney found that the AI system still leans heavily on stereotypes when it comes to national identities. (Victoria Turk / Rest of World)

Correction, 5:36PM: The item about Smyte originally said X had shut it down completely; in fact, it only shut down one part of the tool.

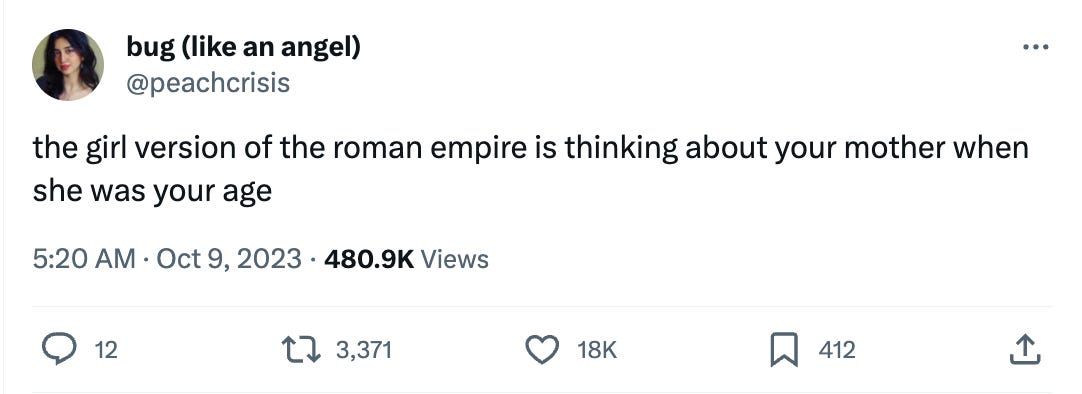

Those good posts

For more good posts every day, follow Casey’s Instagram stories.


Talk to us

Send us tips, comments, questions, and Biden deepfakes: casey@platformer.news and zoe@platformer.news.

Thx for the post. In regards to the deceptive video of Biden: Stating publicly that someone is a pedophile is a clear case of defamation/libel in most jurisdictions, I believe. There must be some terms in Facebook's T&S that cover such a situation. Whoever posted the video should not only have his account banned but face criminal charges.